Newswise — A significant technical pain point in Domain Generalization (DG) is the reliance on learning a universal yet fixed sample-to-label function. Most current approaches focus on domain-invariant representation (DIR), attempting to ignore domain-specific variations in order to find a common mapping. However, such fixed functions often overlook intrinsic, task-relevant information unique to individual domains, leading to poor adaptability when the target domain exhibits a significant distribution shift. Because invariant features are induced solely from the observed source domains, they are invariant only relative to those sources and fail to capture the discriminative nuance required for robust performance in unknown environments.

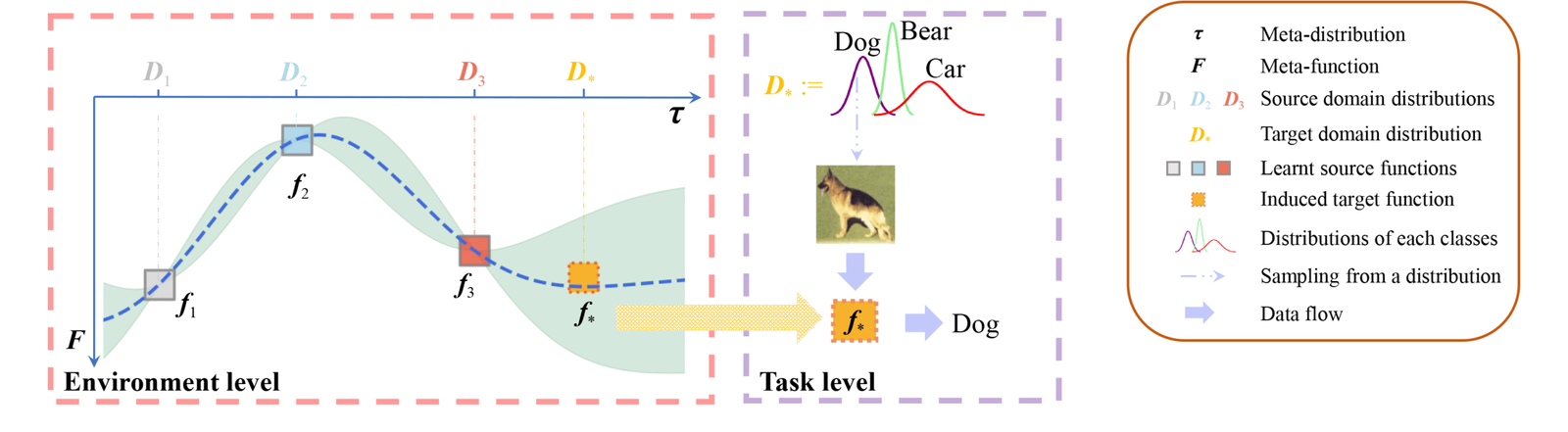

In response to these challenges, a research team from Nanjing University of Aeronautics and Astronautics developed the GPDG framework. The framework treats the environment as a nexus in which domains are regarded as meta-samples drawn from a meta-distribution. Instead of a single static model, GPDG establishes a meta-function (a function over functions) that maps environment-level distributions to specific task functions. By using a Gaussian process with a carefully constructed kernel, the framework captures inter-domain correlations, allowing it to induce a tailored function for an unseen domain from that domain's statistical characteristics, thereby moving beyond the constraints of universal invariance.
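To make the idea of a meta-function concrete, the following is a minimal sketch and not the GPDG implementation. It assumes, purely for illustration, that each domain is summarized by the mean of its samples, that domains are compared with a plain RBF kernel on those summaries, and that each domain's task function is a ridge-regression weight vector. The helper names (`domain_embedding`, `rbf_kernel`, `fit_ridge`, `induce_weights`) and all hyperparameters are hypothetical; the kernel actually constructed in the paper is not reproduced here.

```python
import numpy as np

def domain_embedding(X):
    """Summarize a domain by its sample mean (an assumed, crude distribution statistic)."""
    return X.mean(axis=0)

def rbf_kernel(A, B, length_scale=1.0):
    """RBF kernel between the rows of A and the rows of B."""
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dist / (2.0 * length_scale ** 2))

def fit_ridge(X, y, lam=1e-2):
    """Per-domain task function: ridge-regression weights."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ y)

def induce_weights(source_embeddings, source_weights, target_embedding,
                   noise=1e-3, length_scale=1.0):
    """Zero-mean GP posterior mean over task weights, evaluated at a new domain."""
    K = rbf_kernel(source_embeddings, source_embeddings, length_scale)
    k_star = rbf_kernel(target_embedding[None, :], source_embeddings, length_scale)
    alpha = np.linalg.solve(K + noise * np.eye(len(K)), source_weights)
    return (k_star @ alpha)[0]

# Synthetic source domains: the input shift s also changes the task weights.
rng = np.random.default_rng(0)
embeddings, weights = [], []
for s in (0.0, 1.0, 2.0):
    X = rng.normal(loc=s, scale=1.0, size=(200, 2))
    y = X @ np.array([1.0 + s, -1.0]) + 0.1 * rng.normal(size=200)
    embeddings.append(domain_embedding(X))
    weights.append(fit_ridge(X, y))
embeddings, weights = np.stack(embeddings), np.stack(weights)

# Unseen domain: only unlabeled inputs are used to induce its task function.
X_new = rng.normal(loc=1.5, scale=1.0, size=(200, 2))
w_new = induce_weights(embeddings, weights, domain_embedding(X_new))
print(w_new)
```

In this sketch the unseen domain contributes only unlabeled inputs: its summary statistic determines how strongly each source domain's task function influences the induced one. Because the assumed prior has zero mean, the induced weights shrink toward zero for domains far from every source.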

In experiments on the C-MNIST, PACS, VLCS, and DomainNet datasets, GPDG is reported to generalize better than traditional optimization and meta-learning baselines. The analysis also suggests that adding a domain augmentation strategy further refines the model’s decision boundaries, yielding smoother transitions and higher accuracy across diverse scenarios. Supported by a PAC-Bayesian theoretical bound, this work offers a reliable and flexible paradigm for building intelligent systems that can rapidly adapt to the uncertainties of real-world environments without relying on rigid, fixed representations.

https://journal.hep.com.cn/fcs/EN/10.1007/s11704-025-41278-4